The AI System Spectrum

A Framework for Classifying AI Systems and Understanding Agentic Platforms

Framing Principle

This document serves two purposes:

To help readers correctly classify AI systems — so investors, customers, advisors, and teams do not collapse fundamentally different products into the same category.

To explain how AI‑native platforms create value — by embedding AI as the core operating engine of execution, not as a feature or add‑on.

This document does not assume AGI, self‑aware systems, or model self‑training. It refers to practical, deployable AI systems operating in today’s commercial environments.

Clarifying the Term “Intelligence”

Because the word intelligence can imply AGI or human‑level cognition, we distinguish between two very different forms:

Model Intelligence — The reasoning capability of an LLM or model component (pattern recognition, language generation, prediction).

Operational Intelligence — The structured memory, decision logic, constraints, feedback loops, and execution systems that surround the model.

AI‑native platforms rely on operational intelligence, not model self‑awareness or autonomous self‑improvement. The model provides reasoning capability; the platform provides persistence, orchestration, and accountability.

The structure is intentional:

Parts I–VI establish classification and system foundations.

Parts VII–IX explain operational and economic consequences.

Part X is an optional investor appendix.

PART I: Why Classification Matters

Why the AI Spectrum Exists

“AI” has become one of the most overloaded terms in technology.

Today, everything from a simple LLM-powered text box to full operational automation is labeled AI. As a result, fundamentally different systems are compared as if they were equivalent.

The core issue is not hype. It is misclassification.

When AI systems are evaluated without a shared framework:

Wrappers are mistaken for platforms

Output generators are confused with execution systems

Early traction is conflated with structural defensibility

AI systems do not gradually evolve into something else. They are architected from the start as a wrapper, tool, system, or platform.

The AI Spectrum exists to create durable clarity.

PART II: The AI Spectrum (System Classification)

The AI Spectrum

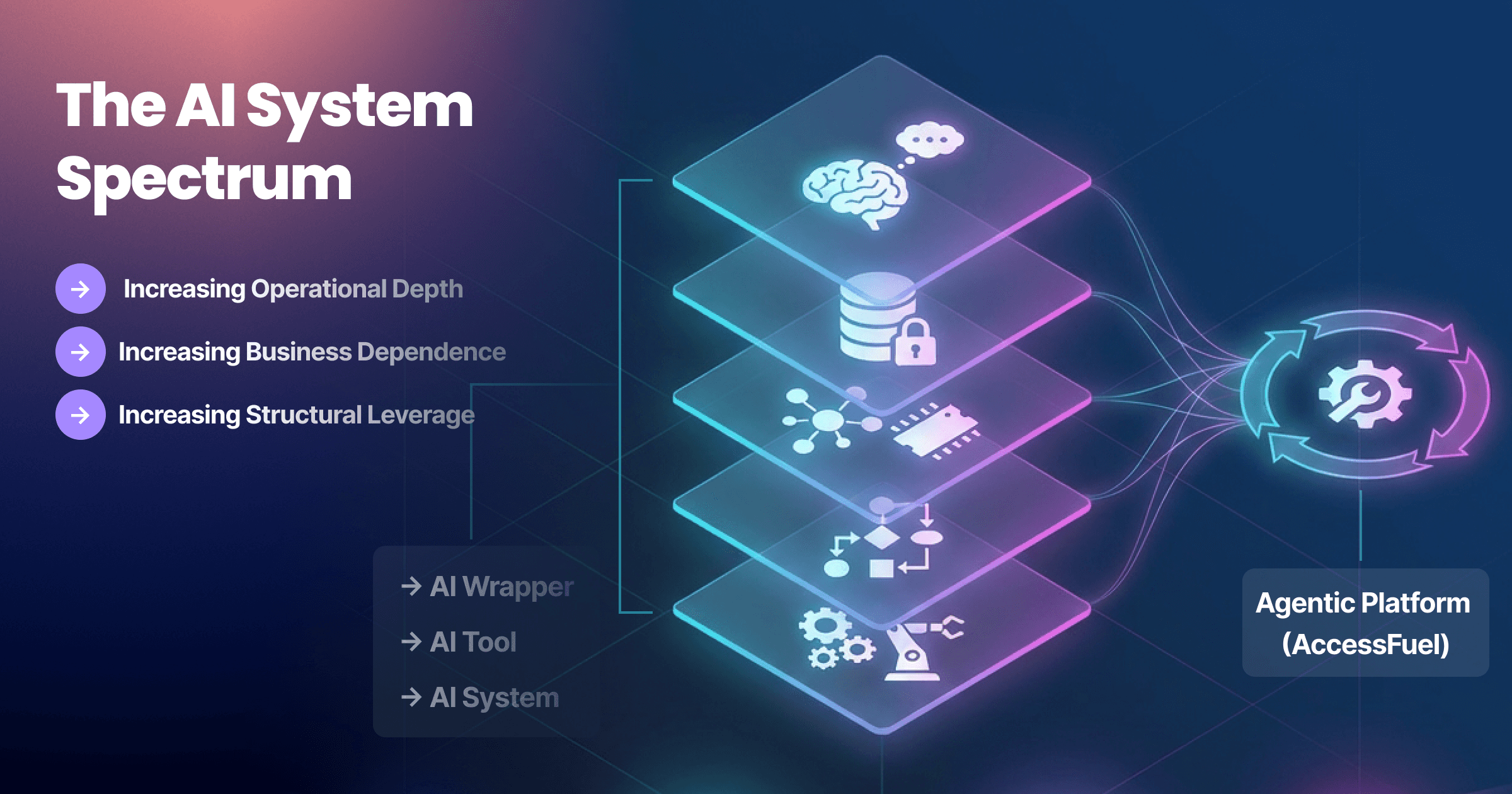

All AI products fall somewhere along the following structural spectrum:

AI Wrapper → AI Tool → AI System → Agentic Platform

These are architectural categories — not maturity stages.

AI Wrappers

AI Wrappers are thin interfaces layered on top of third‑party models.

They:

Are stateless

Do not retain long‑term operational memory

Do not execute real‑world actions independently

Depend almost entirely on external models

They can be useful. But they do not own execution, memory, or domain logic.

Example: A chat interface built on top of a general LLM.

AI Tools

AI Tools apply AI to a defined task.

They:

Solve a narrow job

Use limited business context

Generate outputs but rely on humans to decide and execute

They improve productivity, but they do not embed AI into operations.

Example: AI copy generators or summarization tools.

AI Systems

AI Systems coordinate multiple components.

They:

Maintain some persistence

Incorporate feedback signals

Provide insight across workflows

However, most AI systems stop at analysis. Humans still carry out execution.

AI‑Native Platforms

AI‑Native Platforms, the Agentic Platform category at the far end of the spectrum, embed AI directly into operational workflows.

In AI‑native platforms:

AI is the core engine, not an add‑on feature

Decision logic is embedded into execution

Memory and state persist over time

Actions can be initiated and sequenced by the system

These platforms behave less like productivity tools and more like operating layers for execution.

Why this matters:

Most confusion — including valuation confusion — comes from collapsing these categories.

PART III: What Makes a Platform AI‑Native

The Five Structural Requirements (MC-FOR)

Not every system that uses AI is AI‑native.

To qualify as AI‑native, a platform must satisfy all five of the following structural requirements.

1. Persistent, Compounding Operational Memory

AI‑native platforms retain structured operational learnings from prior decisions and outcomes.

Important clarification:

The underlying model (LLM) does not retrain itself automatically.

The platform stores structured decision history, constraints, outcomes, and business logic in persistent systems (e.g., databases, rule engines, memory layers).

This is an example of operational intelligence, not AGI.

In plain terms: the system does not start from zero each time. It builds institutional knowledge.

Failure mode: Stateless systems that generate answers but never improve structurally.
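As a minimal sketch of what "not starting from zero" could look like, the example below assumes a hypothetical `OperationalMemory` class backed by SQLite. The table layout, method names, and sample records are illustrative assumptions, not a description of any particular platform.

```python
import sqlite3
from typing import Optional


class OperationalMemory:
    """Hypothetical store of past decisions and their measured outcomes."""

    def __init__(self, path: str = ":memory:") -> None:
        # A real deployment would point at a durable database file or service.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS decisions ("
            "context TEXT, action TEXT, outcome REAL)"
        )

    def record(self, context: str, action: str, outcome: float) -> None:
        # Persist what was decided and how it turned out.
        self.db.execute(
            "INSERT INTO decisions VALUES (?, ?, ?)", (context, action, outcome)
        )
        self.db.commit()

    def best_action(self, context: str) -> Optional[str]:
        # Return the action with the highest average outcome for this context,
        # or None when the context has never been seen.
        row = self.db.execute(
            "SELECT action FROM decisions WHERE context = ? "
            "GROUP BY action ORDER BY AVG(outcome) DESC LIMIT 1",
            (context,),
        ).fetchone()
        return row[0] if row else None


# Illustrative history: conversion rates observed for two reminder channels.
memory = OperationalMemory()
memory.record("renewal_reminder", "email", 0.12)
memory.record("renewal_reminder", "sms", 0.31)
memory.record("renewal_reminder", "email", 0.10)
```

Here the model is never retrained. The accumulated history lives in the platform, and `memory.best_action("renewal_reminder")` draws on it the next time the same situation arises.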

2. Embedded Data Gravity

AI‑native platforms accumulate proprietary business context and historical execution data.

Switching platforms means losing operational knowledge — not just switching software.

Failure mode: Generic data inputs that create interchangeable systems.

3. Closed Feedback Loops

The system connects:

Decision → Action → Outcome → Adjustment

Results are captured and used to refine future execution.

Failure mode: One‑directional generation with no awareness of results.
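The Decision → Action → Outcome → Adjustment cycle can be sketched in a few lines. This is a simplified illustration under stated assumptions: the greedy selection rule, the `learning_rate` parameter, and the payoff numbers are all hypothetical choices made for the example.

```python
from typing import Callable, Dict


def run_feedback_loop(
    options: Dict[str, float],
    execute: Callable[[str], float],
    rounds: int,
    learning_rate: float = 0.5,
) -> Dict[str, float]:
    """Repeat Decision -> Action -> Outcome -> Adjustment for a number of rounds."""
    scores = dict(options)  # current estimate of each option's value
    for _ in range(rounds):
        decision = max(scores, key=scores.get)  # Decision: pick the best estimate
        outcome = execute(decision)             # Action and measured Outcome
        # Adjustment: move the estimate toward the observed outcome.
        scores[decision] += learning_rate * (outcome - scores[decision])
    return scores


# Hypothetical environment: the true payoff of each option, unknown to the loop.
TRUE_PAYOFF = {"offer_a": 0.1, "offer_b": 0.6}

final = run_feedback_loop(
    options={"offer_a": 1.0, "offer_b": 1.0},  # optimistic starting estimates
    execute=lambda name: TRUE_PAYOFF[name],
    rounds=20,
)
```

After the loop, the estimate for `offer_b` sits close to its true payoff and ranks above `offer_a`, because each measured result fed back into the next decision. A one-directional generator has no equivalent of the adjustment line.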

4. System‑Level Orchestration (Agentic or Deterministic)

AI‑native platforms coordinate workflows across tools and systems.

Important clarification:

An AI‑native platform may use multi‑agent architectures.

It may also use deterministic orchestration with embedded decision logic.

“Agentic” does not mean AGI or autonomous consciousness.

What matters is this:

The system can plan, sequence, and initiate actions over time in pursuit of defined goals.

Failure mode: AI outputs that still require full human interpretation and coordination.
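To illustrate the deterministic end of this requirement, the sketch below plans a sequence from declared dependencies and then initiates each step without a human trigger. The step names and the `orchestrate` helper are hypothetical; the ordering uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later).

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set

# Hypothetical workflow: each step maps to the set of steps it depends on.
DEPENDENCIES: Dict[str, Set[str]] = {
    "fetch_leads": set(),
    "score_leads": {"fetch_leads"},
    "draft_outreach": {"score_leads"},
    "send_outreach": {"draft_outreach"},
}


def orchestrate(
    dependencies: Dict[str, Set[str]],
    handlers: Dict[str, Callable[[], None]],
) -> List[str]:
    """Plan an execution order from dependencies, then initiate each step in turn."""
    # Plan and sequence: every step appears after the steps it depends on.
    order = list(TopologicalSorter(dependencies).static_order())
    for step in order:
        handlers[step]()  # initiate the action
    return order


# Stand-in handlers that only log their own name; real handlers would call tools.
log: List[str] = []
handlers = {name: (lambda n=name: log.append(n)) for name in DEPENDENCIES}
executed = orchestrate(DEPENDENCIES, handlers)
```

An agentic design would replace the fixed dependency map with a plan produced at run time, but the structural point is the same: the system, not a person, sequences and starts the work.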

5. ROI That Scales with Embedded AI

As operational intelligence compounds and execution improves, returns increase.

Value is tied to work absorbed and outcomes improved — not seat count alone.

Failure mode: AI as a cosmetic feature that does not change operating leverage.
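The all-five rule stated at the start of this part can be written as a simple checklist. The class and field names below are hypothetical labels for the five requirements, chosen for this illustration.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class StructuralChecklist:
    """One boolean per structural requirement described in this part."""

    persistent_memory: bool
    data_gravity: bool
    closed_feedback_loops: bool
    orchestration: bool
    roi_scales_with_ai: bool

    def is_ai_native(self) -> bool:
        # All five requirements must hold; a single gap disqualifies the system.
        return all(getattr(self, f.name) for f in fields(self))

    def gaps(self) -> List[str]:
        # Names of the requirements that are not met.
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical product that stores history but never measures results.
candidate = StructuralChecklist(
    persistent_memory=True,
    data_gravity=True,
    closed_feedback_loops=False,
    orchestration=True,
    roi_scales_with_ai=True,
)
```

For this candidate, `is_ai_native()` is false and `gaps()` names the missing feedback loop, which mirrors how the failure modes above are meant to be used during evaluation.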

PART IV: How AI‑Native Platforms Work (Conceptual View)

AI‑native platforms integrate five layers:

Reasoning (LLMs or model components)

Business Context

Persistent Memory

Decision Logic

Execution Orchestration

The innovation is not the model alone. It is the integration of these layers into a continuously improving operational system.
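One way to picture the integration is a single request passing through all five layers. The sketch below is deliberately small and rests on assumptions: `reason` is a stand-in for a model call, the margin rule is an invented business constraint, and the in-memory list stands in for durable storage.

```python
from dataclasses import dataclass, field
from typing import Dict, List


def reason(prompt: str) -> str:
    """Reasoning layer. Stand-in for an LLM call; returns a fixed suggestion."""
    return "offer_discount"


@dataclass
class Platform:
    business_context: Dict[str, float]  # Business Context layer
    # Persistent Memory layer (a list here; a real system would use a database).
    memory: List[Dict[str, str]] = field(default_factory=list)

    def decide(self, suggestion: str) -> str:
        # Decision Logic layer: apply a business constraint to the suggestion.
        if suggestion == "offer_discount" and self.business_context["margin"] < 0.2:
            return "send_reminder"
        return suggestion

    def execute(self, action: str) -> str:
        # Execution Orchestration layer: a real system would call external tools.
        return f"executed:{action}"

    def handle(self, goal: str) -> str:
        suggestion = reason(f"Goal: {goal}. Context: {self.business_context}")
        action = self.decide(suggestion)
        result = self.execute(action)
        self.memory.append({"goal": goal, "action": action, "result": result})
        return result


platform = Platform(business_context={"margin": 0.15})
outcome = platform.handle("retain_customer")
```

The model suggests a discount, the decision layer overrides it because the margin is too thin, the action is executed, and the result is recorded. Remove any one layer and the remaining code degrades into one of the earlier categories on the spectrum.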

PART V: From Insight to Execution

Traditional software stops at insight.

AI‑native platforms move from:

Insight → Recommendation → Human Execution

to:

Goal → System Decision → System Execution → Measured Outcome

Execution becomes repeatable, measurable, and continuously refined.

This is the shift from assistance to operational embedding.

PART VI: AI Operating System Depth

How Deep Does AI Run Inside the Business?

Not all AI runs at the same depth inside a company.

Many systems assist humans. Some coordinate workflows. Very few operate at the core of the business operating system.

The real distinction is not how “intelligent” a system appears — it is how deeply AI is embedded into execution.

We call this AI Operating System Depth.

| Dimension | Surface Layer (Assistive) | Workflow Layer (Coordinated) | OS Layer (Embedded) |
|---|---|---|---|
| Role of AI | Advisor | Co-Pilot | Operating Engine |
| Decision Authority | Human-led | Shared | System-led (within constraints) |
| Execution Initiation | Human-triggered | Conditional automation | Goal-driven system initiation |
| Memory Persistence | Session-based | Contextual | Structural & compounding |
| Feedback Integration | Manual review | Partial loop | Closed-loop refinement |
| Operational Impact | Task productivity | Workflow optimization | Labor absorption |
| Business Dependency | Low | Moderate | High (OS-level reliance) |

Most production AI today operates at the Surface or Workflow layer.

Agentic platforms operate at OS depth — where AI becomes the operating engine for defined business outcomes.

Depth drives leverage.

The deeper AI runs in the operating system, the more structural its impact becomes.

PART VII: Vertical Intelligence + Horizontal Orchestration

Agentic platforms must combine:

Vertical depth (domain constraints and guardrails)

Horizontal coordination (end-to-end workflow control)

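Vertical depth without horizontal coordination stalls: the system understands the domain but cannot carry work through the workflow.

Horizontal coordination without vertical depth misfires: the system moves work end to end but ignores the domain's constraints.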

Combining both creates defensibility.

PART VIII: From Software‑as‑a‑Service to Service‑as‑Software

When Agentic platforms reach OS depth, a structural economic shift occurs.

Traditional software‑as‑a‑service (SaaS) provides access to tools. Humans use those tools to perform work.

Agentic platforms deliver defined portions of the work itself.

This is the transition from software‑as‑a‑service to service‑as‑software.

SaaS: Access to Tools

In the traditional SaaS model:

Value is tied to user access (seats or licenses)

Humans remain the primary decision‑makers

Execution is manual, even if assisted

Pricing scales with headcount

SaaS improves productivity, but it does not fundamentally alter operating structure.

Service‑as‑Software: Delivery of Work

In a service‑as‑software model:

The system executes defined workflows autonomously within constraints

Work previously performed by humans is absorbed by the platform

Value is tied to outcomes delivered or operating leverage gained

Pricing increasingly reflects impact, not seat count

The question shifts from:

“How many users need access?”

To:

“How much work is the system absorbing — and how critical is that work?”

Why Seat‑Based Pricing Breaks

Seat‑based pricing made sense when software amplified human labor.

As Agentic platforms absorb labor directly:

One system may replace the output of multiple roles

Value scales non‑linearly with execution depth

Marginal execution improves as operational memory compounds

Seat count becomes a weak proxy for value.
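A small worked example makes the divergence concrete. Every number below is hypothetical and chosen only to show the direction of the effect; the two pricing functions are simplified assumptions, not a recommended pricing model.

```python
def seat_based_revenue(seats: int, price_per_seat: float) -> float:
    """Revenue when the customer pays per human user."""
    return seats * price_per_seat


def outcome_based_revenue(tasks_delivered: int, price_per_task: float) -> float:
    """Revenue when the customer pays per unit of work delivered."""
    return tasks_delivered * price_per_task


# Hypothetical customer: all figures are illustrative.
PRICE_PER_SEAT = 100.0  # monthly price per human user
PRICE_PER_TASK = 2.0    # price per task delivered

# Before: 10 people deliver 1,000 tasks a month.
# After: the platform absorbs most of the work, so 2 people supervise
# while 1,500 tasks are delivered.
before_seat = seat_based_revenue(10, PRICE_PER_SEAT)
after_seat = seat_based_revenue(2, PRICE_PER_SEAT)
before_outcome = outcome_based_revenue(1_000, PRICE_PER_TASK)
after_outcome = outcome_based_revenue(1_500, PRICE_PER_TASK)
```

Under these assumptions, seat-based revenue falls from 1,000 to 200 while outcome-based revenue rises from 2,000 to 3,000. The platform delivers more work with fewer users, so the seat metric moves in the opposite direction from the value delivered.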

This is not a pricing innovation. It is a structural reclassification of what is being sold.

SaaS sells access to capability. Service‑as‑software sells delivered outcomes.

PART IX: Accountability Shift

SaaS vendors are accountable for uptime.

Agentic platforms are accountable for correctness of execution.

When systems perform work, errors are system failures — not user errors.

Accuracy thresholds create structural defensibility.

PART X: Investor Appendix

Agentic platforms diverge from SaaS valuation logic because:

Work absorbed replaces labor budgets

Returns scale non-linearly as operational intelligence compounds

Marginal execution improves as memory structures mature

Investors must evaluate system leverage and embedded operational intelligence — not just revenue multiples.

Where AccessFuel Fits

AccessFuel is architected as an Agentic platform operating at OS depth.

AI is embedded into execution logic.

Persistent operational memory is retained.

Decision workflows are orchestrated across systems.

Closed feedback loops refine future execution.

AccessFuel is not a simple LLM wrapper or feature layer.

It is designed to function as an operating system layer for growth and execution.